Scalable Software Verification in Lean 4

Senior Principal Applied Scientist, AWS

Chief Architect, Lean FRO

Signal Shot

The Liquid Tensor Experiment was Lean's moment with mathematics.

Signal Shot is our moment with software.

Lean

-

A programming language and a proof assistant

-

Lean is implemented in Lean. Very extensible.

-

Small trusted kernel. Proofs can be exported and checked independently. Multiple independent kernels.

-

Lean FRO: 20 engineers building Lean full-time.

-

Mathlib: 200,000+ theorems, 750+ contributors, 2.2M+ LoC

-

CSLib: a Mathlib for computer science

-

242,000+ unique installations: VS Code (140K) + Open VSX (102K). 8,000+ GitHub repositories.

-

These slides were written in Lean using Verso, so every code example in the talk is type-checked.

"Lean has become the de facto choice for AI-based systems of mathematical reasoning." — ACM SIGPLAN Award

Mathematics Runs on Lean

Six Fields Medalists engaged: Tao, Scholze, Viazovska, Gowers, Hairer, Freedman.

-

Liquid Tensor Experiment: verified a proof Scholze himself had "lingering doubts" about.

-

Equational Theories Project — Terence Tao

-

Fields Medal sphere packing — AI assisted

-

Fermat's Last Theorem — Kevin Buzzard

-

Mochizuki: formalization of IUT. Lean as a tool for communication.

Signal Shot needs non-trivial mathematics.

The Scaling Problem

Verification with proof assistants has not scaled so far.

Chlipala's group documented this for Rocq. The same story holds in Lean.

The bottleneck is the platform. Not AI capability. Not human time.

Lean@Google Hackathon

Andres Erbsen — Google ISE Formal. Bedrock2. Fiat Cryptography.

A Rocq veteran distilled the scaling problem into a minimal Lean challenge.

A small deeply embedded imperative language.

inductive Binop where

| add | sub

deriving Repr

inductive Expr where

| literal (v: Int)

| var (x: String)

| op (bop : Binop) (e1 e2 : Expr)

deriving Repr

inductive Cmd where

| skip : Cmd

| set : String → Expr → Cmd

| seq : Cmd → Cmd → Cmd

| input : String → Cmd

-- ..

deriving Repr

abbrev Memory := PartialMap Word Byte

abbrev LocalContext := PartialMap String Word

Andres' Challenge — Operational Semantics

inductive IOEvent

| IN : Word → IOEvent

| OUT : Word → IOEvent

abbrev Post := List IOEvent → Memory → LocalContext → Prop

inductive Exec : Cmd → List IOEvent → Memory → LocalContext → Post → Prop

| skip : post es m l → Exec .skip es m l post

| seq : Exec c1 es m l mid →

(∀ es' m' l', mid es' m' l' → Exec c2 es' m' l' post) →

Exec (.seq c1 c2) es m l post

| set : (h_eval : Expr.eval m l e = some v) →

(h_post : post es m (PartialMap.put l x v)) →

Exec (.set x e) es m l post

| input : (∀ v, post (IOEvent.IN v :: es) m (PartialMap.put l x v)) →

Exec (.input x) es m l post

-- ..

theorem Exec.seq_cps (c1 c2 : Cmd) (es : List IOEvent)

(m : Memory) (l : LocalContext) (post : Post)

: Exec c1 es m l (fun es' m' l' => Exec c2 es' m' l' post) →

Exec (.seq c1 c2) es m l post := byc1:Cmdc2:Cmdes:List IOEventm:Memoryl:LocalContextpost:Post⊢ (Exec c1 es m l fun es' m' l' => Exec c2 es' m' l' post) → Exec (c1.seq c2) es m l post

intro hc1:Cmdc2:Cmdes:List IOEventm:Memoryl:LocalContextpost:Posth:Exec c1 es m l fun es' m' l' => Exec c2 es' m' l' post⊢ Exec (c1.seq c2) es m l post; apply Exec.seqc1:Cmdc2:Cmdes:List IOEventm:Memoryl:LocalContextpost:Posth:Exec c1 es m l fun es' m' l' => Exec c2 es' m' l' post⊢ Exec c1 es m l ?midc1:Cmdc2:Cmdes:List IOEventm:Memoryl:LocalContextpost:Posth:Exec c1 es m l fun es' m' l' => Exec c2 es' m' l' post⊢ ∀ (es' : List IOEvent) (m' : Memory) (l' : LocalContext), ?mid es' m' l' → Exec c2 es' m' l' postc1:Cmdc2:Cmdes:List IOEventm:Memoryl:LocalContextpost:Posth:Exec c1 es m l fun es' m' l' => Exec c2 es' m' l' post⊢ Post; apply hc1:Cmdc2:Cmdes:List IOEventm:Memoryl:LocalContextpost:Posth:Exec c1 es m l fun es' m' l' => Exec c2 es' m' l' post⊢ ∀ (es' : List IOEvent) (m' : Memory) (l' : LocalContext), Exec c2 es' m' l' post → Exec c2 es' m' l' post; introsc1:Cmdc2:Cmdes:List IOEventm:Memoryl:LocalContextpost:Posth:Exec c1 es m l fun es' m' l' => Exec c2 es' m' l' postes'✝:List IOEventm'✝:Memoryl'✝:LocalContexta✝:Exec c2 es'✝ m'✝ l'✝ post⊢ Exec c2 es'✝ m'✝ l'✝ post; assumptionAll goals completed! 🐙

Andres' Challenge — A Parametric Program

notation:130 a " ;;\n" b => Cmd.seq a b

notation:150 x " ::= " e:150 => Cmd.set x e

notation:160 a " +' " b:160 => Expr.op Binop.add (Expr.var a) (Expr.var b)

notation:160 a " -' " b:160 => Expr.op Binop.sub (Expr.var a) (Expr.var b)

/-

Generates a command sequence of the form

input b

a := b

a := a + a; a := a - b

...

a := a + a; a := a - b

-/

def generated_cmd (n : Nat) (a b : String) : Cmd :=

.input b ;;

a ::= .var b ;;

repeated_cmds n a b

abbrev spec (m : Memory) : Post :=

fun es m' l =>

m' = m ∧

∃ v : Word, es = [IOEvent.IN v] ∧ l.get "a" = some v

def Goal (n : Nat) : Prop :=

∀ m l, Exec (generated_cmd n "a" "b") [] m l (spec m)

Andres' Challenge — An Example

Each goal is a metavariable: a typed hole in a local context. apply thm unifies the goal with thm's conclusion and emits one new metavariable per remaining premise.

macro "step" : tactic =>

`(tactic| (apply Exec.seq_cps; apply Exec.set; simp_vc; rfl))

example : Goal 1 := by⊢ Goal 1

intro m lm:Memoryl:LocalContext⊢ Exec (generated_cmd 1 "a" "b") [] m l (spec m); simp only [generated_cmd, repeated_cmds]m:Memoryl:LocalContext⊢ Exec

(Cmd.input "b" ;;

"a" ::= Expr.var "b" ;;

"a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

[] m l (spec m); unfold specm:Memoryl:LocalContext⊢ Exec

(Cmd.input "b" ;;

"a" ::= Expr.var "b" ;;

"a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

[] m l fun es m' l => m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

-- input b

apply Exec.seq_cpsm:Memoryl:LocalContext⊢ Exec (Cmd.input "b") [] m l fun es' m' l' =>

Exec

("a" ::= Expr.var "b" ;;

"a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

es' m' l' fun es m' l => m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

apply Exec.inputm:Memoryl:LocalContext⊢ ∀ (v : Word),

Exec

("a" ::= Expr.var "b" ;;

"a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

[IOEvent.IN v] m (PartialMap.put l "b" v) fun es m' l =>

m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

intro vm:Memoryl:LocalContextv:Word⊢ Exec

("a" ::= Expr.var "b" ;;

"a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

[IOEvent.IN v] m (PartialMap.put l "b" v) fun es m' l =>

m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

-- a := b

apply Exec.seq_cpsm:Memoryl:LocalContextv:Word⊢ Exec ("a" ::= Expr.var "b") [IOEvent.IN v] m (PartialMap.put l "b" v) fun es' m' l' =>

Exec

("a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

es' m' l' fun es m' l => m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

apply Exec.setm:Memoryl:LocalContextv:Word⊢ Expr.eval m (PartialMap.put l "b" v) (Expr.var "b") = some ?vm:Memoryl:LocalContextv:Word⊢ Exec

("a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

[IOEvent.IN v] m ((PartialMap.put l "b" v).put "a" ?v) fun es m' l =>

m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some vm:Memoryl:LocalContextv:Word⊢ Word

simp_vcm:Memoryl:LocalContextv:Word⊢ v = ?vm:Memoryl:LocalContextv:Word⊢ Exec

("a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

[IOEvent.IN v] m ((PartialMap.put l "b" v).put "a" ?v) fun es m' l =>

m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some vm:Memoryl:LocalContextv:Word⊢ Word

rflm:Memoryl:LocalContextv:Word⊢ Exec

("a" ::= "a" +' "a" ;;

"a" ::= "a" -' "b" ;;

Cmd.skip)

[IOEvent.IN v] m ((PartialMap.put l "b" v).put "a" v) fun es m' l =>

m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

-- a := a + a

stepm:Memoryl:LocalContextv:Word⊢ Exec

("a" ::= "a" -' "b" ;;

Cmd.skip)

[IOEvent.IN v] m (((PartialMap.put l "b" v).put "a" v).put "a" (Word.add (some v) (some v))) fun es m' l =>

m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

-- a := a - b

stepm:Memoryl:LocalContextv:Word⊢ Exec Cmd.skip [IOEvent.IN v] m ((((PartialMap.put l "b" v).put "a" v).put "a" (Word.add (some v) (some v))).put "a" v)

fun es m' l => m' = m ∧ ∃ v, es = [IOEvent.IN v] ∧ PartialMap.get l "a" = some v

-- skip

apply Exec.skipm:Memoryl:LocalContextv:Word⊢ m = m ∧

∃ v_1,

[IOEvent.IN v] = [IOEvent.IN v_1] ∧

((((PartialMap.put l "b" v).put "a" v).put "a" (Word.add (some v) (some v))).put "a" v).get "a" = some v_1

simp only [List.cons.injEq, IOEvent.IN.injEq, and_true, PartialMap.put_put,

PartialMap.get_put, Option.some.injEq, and_self, exists_eq']All goals completed! 🐙

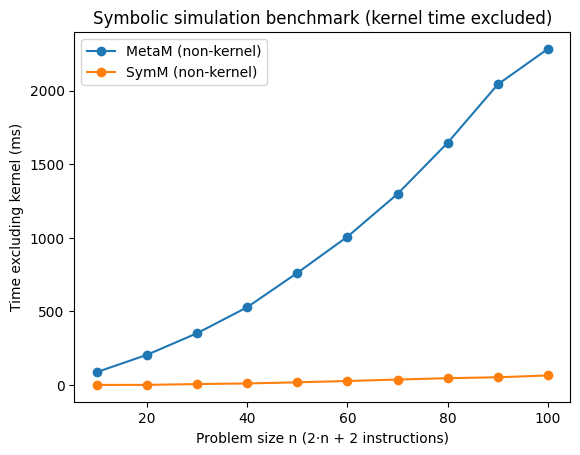

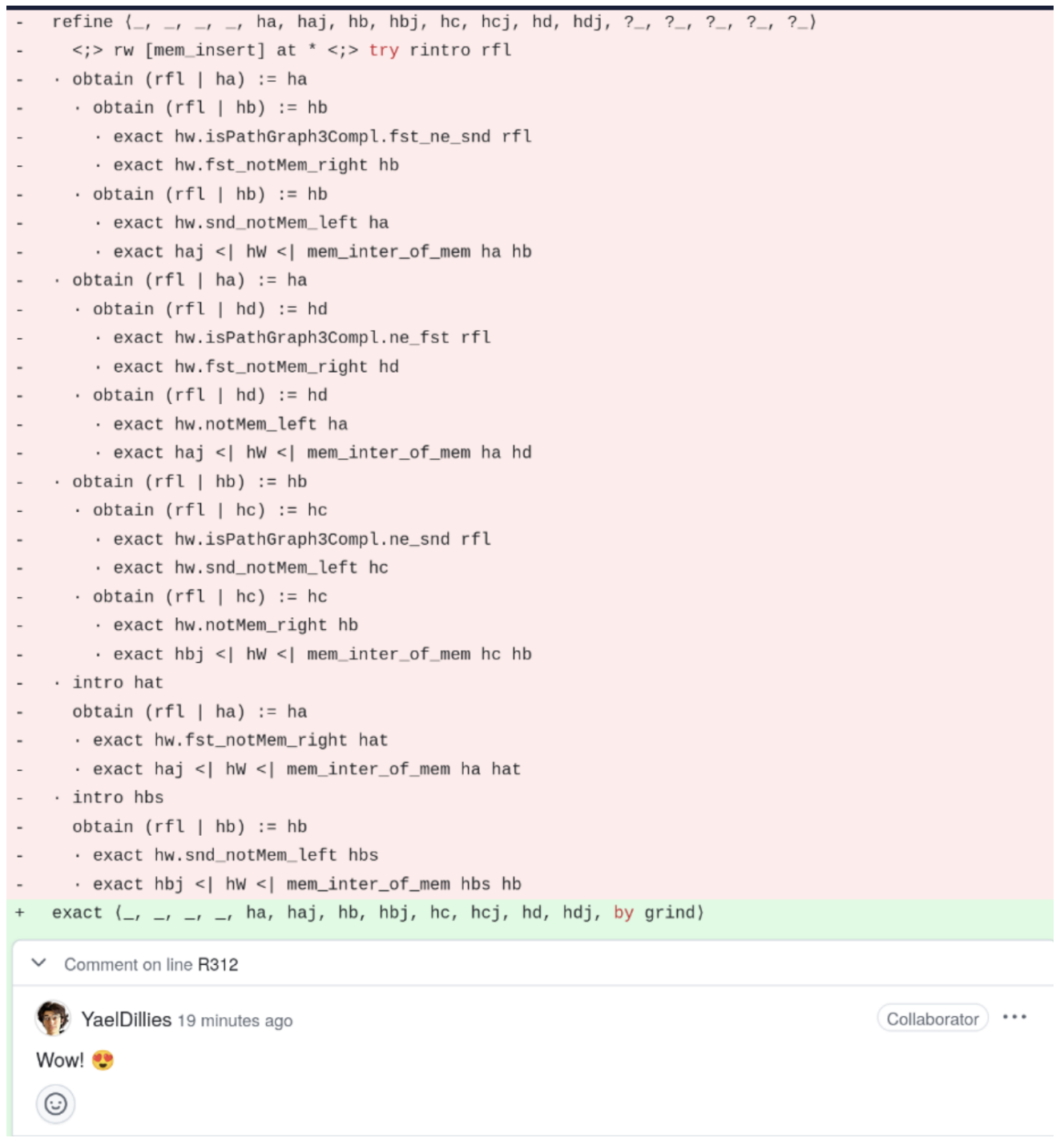

Andres' Challenge — Baseline

With Lean's default tactic framework, MetaM. Superlinear behavior.

| Goal | Tactics | Kernel |

|---|---|---|

goal_10 | 88 ms | 5 ms |

goal_50 | 665 ms | 42 ms |

goal_100 | 2073 ms | 161 ms |

VC Generation

A VC generator turns code and specifications into proof obligations.

Lean-based VC generators:

-

Aeneas — Rust verification via translation to Lean.

-

Velvet — a Dafny-style verifier built in Lean.

-

mvcgen — Lean's VC generator for monadic programs.

All three share the idiom we just saw.

What Must Be Fast

-

Efficient

apply. -

Efficient metavariable management.

-

Preserve term sharing.

-

Reuse

simpresults across VCs and across simulation steps. -

Do not traverse the same subterms over and over.

Son Ho's Observation

Aeneas emits many verification conditions.

Each corresponds roughly to an execution path. They share many hypotheses — and automation redoes the same work on each.

We must carry the state of the proof automation that discharges the easy cases.

SymM

A monad for symbolic simulation and VC generation.

-

Proof state lives in a restricted world.

-

Terms are kept maximally shared.

-

Specialized metadata and user extensions live in the state.

-

Operations are engineered for the VC loop, not for general interactive proving.

The Key Invariant

The local context monotonically grows during symbolic simulation.

-

We introduce local declarations and hypotheses.

-

We do not revert them.

-

We do not delete hypotheses.

-

Shared terms stay shared.

MetaM must support arbitrary proof-state surgery. VC generation does not need that power.

Once contexts only grow and terms stay shared:

-

scope checks around

?m := ebecome cheap, -

pointer equality becomes a valid cache key,

-

automation state survives from one VC to the next.

Shared Terms, Shared Work

SymM caches metadata on shared subterms:

-

maximal free variable, inferred type, level,

-

congruence information,

-

cheap definitional-equality queries.

Every operation preserves the sharing:

-

rewrite, abstract under binders, instantiate, beta-reduce.

The VC loop stops turning one shared DAG into copied trees.

Cheap Matching

Kernel convertibility is powerful: β / δ / ι / ζ / η plus proof irrelevance.

VC generation pays for that power over and over.

SymM preprocesses patterns once, then matches mostly syntactically.

-

No unfolding in the hot path.

-

Avoid fresh metavariables whenever arguments can be assigned immediately.

apply uses a precompiled backward rule. Only genuinely-unknown premises become subgoals.

A Verifier in SymM

partial def solve (mvarId : MVarId) : SymM Unit := do

let exec_cpsRule ← mkBackwardRuleFromDecl ``Exec.seq_cps

let inputRule ← mkBackwardRuleFromDecl ``Exec.input

let skipRule ← mkBackwardRuleFromDecl ``Exec.skip

let setRule ← mkBackwardRuleFromDecl ``Exec.set

let rflRule ← mkBackwardRuleFromDecl ``Eq.refl

let mvarId ← preprocessMVar mvarId

-- `intro m l`

let .goal _ mvarId ← Sym.introN mvarId 2 | failure

let mvarId ← simpUnfold mvarId

-- `apply Exec.seq_cps`

let .goals [mvarId] ← exec_cpsRule.apply mvarId | failure

-- `apply Exec.input`

let .goals [mvarId] ← inputRule.apply mvarId | failure

-- `intro v`

let .goal _ mvarId ← Sym.introN mvarId 1 | failure

let rec loop (mvarId : MVarId) : SymM MVarId := do

let .goals [mvarId] ← exec_cpsRule.apply mvarId | return mvarId

let .goals [mvarId', mvarId, _] ← setRule.apply mvarId | failure

let mvarId' ← simpVC mvarId'

let .goals [] ← rflRule.apply mvarId' | failure

loop mvarId

let mvarId ← loop mvarId

let .goals [mvarId] ← skipRule.apply mvarId | failure

simpFinal mvarId

example : Goal 10 := by⊢ Goal 10

unfold Goal⊢ ∀ (m : Memory) (l : LocalContext), Exec (generated_cmd 10 "a" "b") [] m l (spec m); run_tac liftSym solveAll goals completed! 🐙

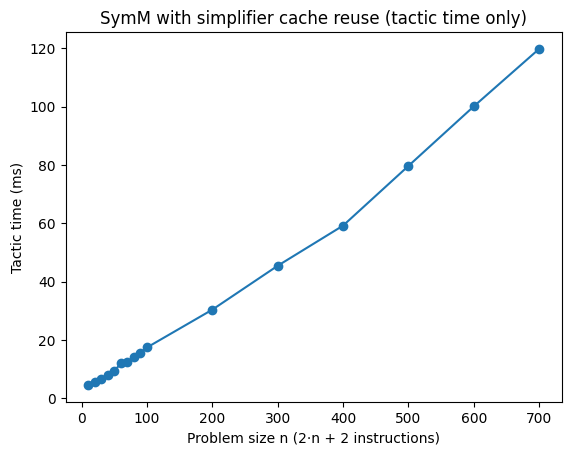

Andres' Challenge — SymM

-

MetaM: 2073 ms at n=100. Superlinear. Fails before n=700.

-

SymM + simp-in-loop: 16 ms at n=100. ~100× faster.

-

Linear out to n=700. 120 ms.

Why Meta.simp Does Not Scale Here

Meta.simp is general-purpose.

VC generation pays for features it does not need:

-

expensive

isDefEqin rewrite matching, -

inefficient binder traversal,

-

contextual control-flow simplification that explodes.

So we built a new simplifier for the actual workload.

A New Simplifier (1/4) — Shared Terms, Shared Results

-

Pointer-keyed caches on maximally shared terms.

-

Pattern-based rewriting instead of general

isDefEqin the hot path. -

Proof construction engineered not to reconstruct giant terms.

-

Cached results persist across repeated

simpcalls inside the VC loop.

def n_5 := f (0 + f (0 + f (0 + f (0 + f (0 + x)))))

A New Simplifier (2/4) — Binders Without Quadratic Pain

-

Descends under

let/have,λ, and∀. -

Custom congruence machinery for binders.

-

Preserve

let/havebinders by default. -

Tracks the changed subterms, not repeated reconstruction of the whole telescope.

def ex_4 :=

have y := 0 + (x + 4); have y_1 := 0 + (x + 3);

have y_2 := 0 + (x + 2); have y_3 := 0 + (x + 1);

y_3 + (y_2 + (y_1 + (y + 0)))

A New Simplifier (3/4) — Control Flow and Simproc

-

Simplify the condition or discriminant.

-

Do not eagerly simplify both branches.

-

Leave symbolic case-splitting to the simulator.

Simproc is the programmable layer VC generators use:

-

prehooks before descent,posthooks after descent, -

compose rewriting, ground evaluation, control-flow, and domain-specific passes.

elab "sym_simp" "[" declNames:ident,* "]" : tactic => do

let declNames ← declNames.getElems.mapM fun s => realizeGlobalConstNoOverload s.raw

let rewrite ← Sym.mkSimprocFor declNames Sym.Simp.dischargeSimpSelf

let methods : Sym.Simp.Methods := {

pre := Sym.Simp.simpControl

post := Sym.Simp.evalGround >> rewrite

}

liftMetaTactic1 fun mvarId => Sym.SymM.run do

let mvarId ← Sym.preprocessMVar mvarId

(← Sym.simpGoal mvarId methods).toOption

example (f g : Nat → Nat) : (if a + 0 = a then f else g) a = f a := bya:Natf:Nat → Natg:Nat → Nat⊢ (if a + 0 = a then f else g) a = f a

sym_simp [Nat.add_zero]All goals completed! 🐙

A New Simplifier (4/4) — Context-Dependent Caching

Some simplifications depend only on the term.

Others depend on the local context: hypotheses, discharge success, binder-local assumptions.

Every result carries a contextDependent bit.

-

Context-free results go in a persistent cache.

-

Context-dependent results go in a transient cache.

-

Reset the transient cache when entering binders and at each top-level

simprun.

We do not give up caching because some rewrites are contextual.

We separate contextual results from non-contextual ones.

cbv

cbv performs simplification that closely mimics call-by-value evaluation and is proof producing.

It is implemented on top of the new simplifier. Wojciech Różowski (Lean FRO).

It works with functions defined via well-founded recursion or partial fixpoints.

def numbers : List String :=

["zero", "one", "two", "three", "four", "five", "six", "seven", "eight", "nine"]

def numberToNumber : Std.HashMap String Nat :=

(0...=9).iter.fold (init := ∅) (fun sofar num => sofar.insert numbers[num]! num)

def sortNumbers (str : String) : String :=

str.split ' '

|>.filter (!·.isEmpty)

|>.map (numberToNumber[·.copy]!)

|>.toList

|>.mergeSort

|>.map (numbers[·]!)

|> String.intercalate " "

example : sortNumbers "five three" = "three five" := by⊢ sortNumbers "five three" = "three five" cbvAll goals completed! 🐙

example : sortNumbers "six one two" = "one two six" := by⊢ sortNumbers "six one two" = "one two six" cbvAll goals completed! 🐙

grind

New proof automation, shipped in Lean v4.22. Kim Morrison and me.

A virtual whiteboard, inspired by modern SMT solvers.

-

Writes facts on the board. Merges equivalent terms.

-

Cooperating engines: congruence closure, E-matching, constraint propagation, guided case analysis.

-

Satellite theory solvers: cutsat (linear integer arithmetic), commutative rings (Gröbner), linarith, AC.

-

Native to dependent type theory. No translation to FOL.

-

Produces ordinary Lean proof terms. Kernel-checkable.

The E-graph is the central data structure. Satellite solvers read from it and propagate back.

grind — Theory Combination

example [CommRing α] [NoNatZeroDivisors α]

(a b c : α) (f : α → Nat)

: a + b + c = 3 →

a^2 + b^2 + c^2 = 5 →

a^3 + b^3 + c^3 = 7 →

f (a^4 + b^4) + f (9 - c^4) ≠ 1 := byα:Type u_1inst✝¹:CommRing αinst✝:NoNatZeroDivisors αa:αb:αc:αf:α → Nat⊢ a + b + c = 3 → a ^ 2 + b ^ 2 + c ^ 2 = 5 → a ^ 3 + b ^ 3 + c ^ 3 = 7 → f (a ^ 4 + b ^ 4) + f (9 - c ^ 4) ≠ 1

grindAll goals completed! 🐙

Three solvers meet at the E-graph:

-

Ring solver derives

a^4 + b^4 = 9 - c^4. -

Congruence closure lifts it to

f (a^4 + b^4) = f (9 - c^4). -

Linear integer arithmetic closes

2 * f (9 - c^4) ≠ 1.

The Nelson-Oppen playbook. Inside dependent type theory. No SMT translation layer.

grind — Typeclass-Parameterized Theory Solvers

Satellite solvers activate automatically when the type classes are present.

-

cutsat—ToIntclass.Int,Int32,BitVec 64,Fin n. -

Ring —

CommRing,CommSemiring,Field,IsCharP,NoNatZeroDivisors. -

linarith— module over the integers. Preorders, partial, linear orders. -

AC — any associative-commutative operator.

example (x y : Int)

: 27 ≤ 11*x + 13*y → 11*x + 13*y ≤ 45 →

-10 ≤ 7*x - 9*y → 7*x - 9*y ≤ 4 → False := byx:Inty:Int⊢ 27 ≤ 11 * x + 13 * y → 11 * x + 13 * y ≤ 45 → -10 ≤ 7 * x - 9 * y → 7 * x - 9 * y ≤ 4 → False

grindAll goals completed! 🐙

-- BitVec 8 is a CommRing of characteristic 256.

-- No bitvector-specific theory needed.

example (x : BitVec 8) : (x - 16) * (x + 16) = x^2 := byx:BitVec 8⊢ (x - 16) * (x + 16) = x ^ 2

grindAll goals completed! 🐙

grind — Annotations and E-Matching

Library authors annotate theorems; grind instantiates them by E-matching — pattern matching modulo the E-graph.

-

[grind =]— use the LHS as the E-matching pattern. -

[grind →]— forward reasoning: patterns from hypotheses. -

[grind ←]— backward reasoning: patterns from the conclusion. -

grind_pattern— custom multi-patterns. -

Constraint system on patterns:

guard,check,is_value,is_strict_value.

@[grind =] theorem fg {x} : f (g x) = x := byx:Nat⊢ f (g x) = x

unfold f gx:Nat⊢ 2 * x / 2 = x; omegaAll goals completed! 🐙

example {a b c} : f a = b → a = g c → b = c := bya:Natb:Natc:Nat⊢ f a = b → a = g c → b = c

grindAll goals completed! 🐙

4,000+ grind uses in Mathlib.

Threading grind State — Grind.Goal

In SymM-based VC generators, tactics thread Grind.Goal instead of MVarId.

A Grind.Goal pairs the MVarId with the grind state. Shared across every VC that inherits it.

The state carries:

-

E-graph — every discovered equality and propagated fact.

-

Instantiated theorems — what E-matching has already fired.

-

Satellite solver states.

SymM Interactive Mode

Humans and AI both need to inspect and steer VC generation.

example (p q : Prop) : p → q → p ∧ q := byp:Propq:Prop⊢ p → q → p ∧ q

sym =>

intro hp hqp:Propq:Prophp:phq:q⊢ p ∧ q

apply And.introp:Propq:Prophp:phq:q⊢ pp:Propq:Prophp:phq:q⊢ q

apply hpp:Propq:Prophp:phq:q⊢ q

apply hqAll goals completed! 🐙

register_sym_simp chainSimp where

post := ground >> rewrite [Nat.add_zero, Nat.zero_add]

example (x y : Nat) (h : x ≤ y) : (1 - 1) + x ≤ 2*y + (1 + 0) := byx:Naty:Nath:x ≤ y⊢ 1 - 1 + x ≤ 2 * y + (1 + 0)

sym =>

simp chainSimpx:Naty:Nath:x ≤ y⊢ x ≤ 2 * y + 1

liaAll goals completed! 🐙

mvcgen — Ported to SymM

Lean's VC generator for monadic programs. Ported to SymM by Sebastian Graf (Lean FRO).

Andres' challenge using Lean monadic code. Linear out to n=1000.

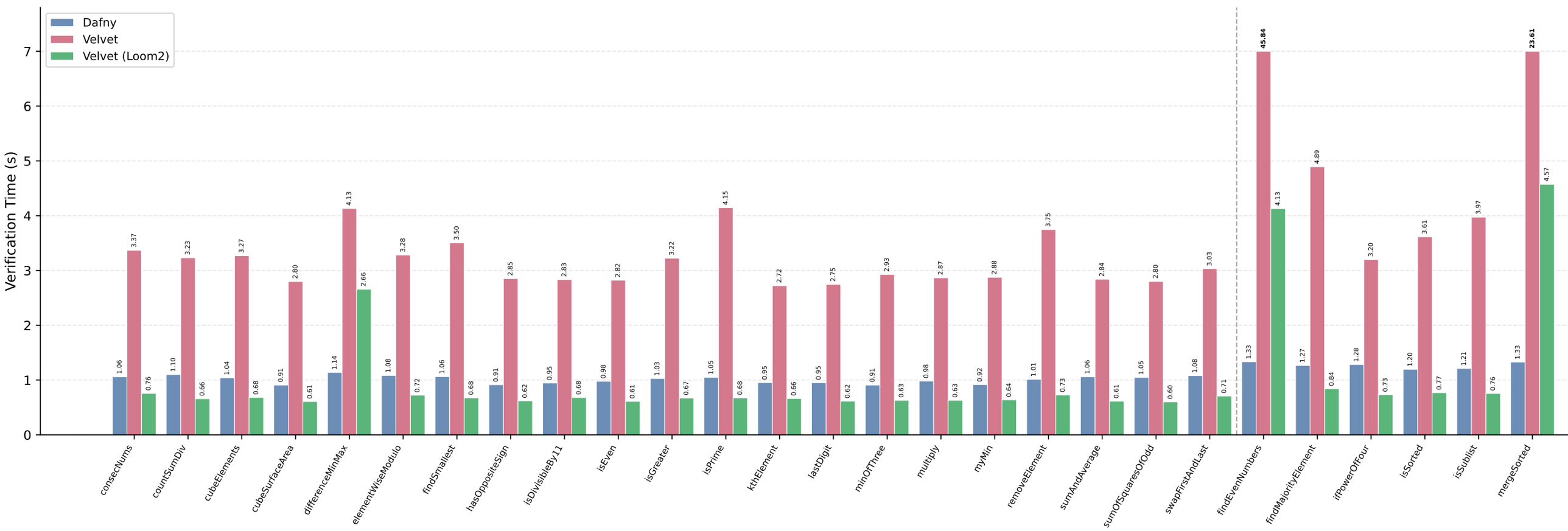

Velvet — Ported to SymM

A Dafny-style verifier in Lean. Ported to SymM by Vova (Lean FRO intern). Preliminary results.

-

Before: Velvet ran ~3× slower than Dafny.

-

After: Faster than Dafny on 24 of 27 benchmarks; competitive on the other 3 (

differenceMinMax,findEvenNumbers,mergeSorted).

One port beat Dafny on 24 of 27. Dafny has a vast TCB.

AI

The platform is ready.

AI is already using it.

zlib

A general-purpose AI converted zlib (a C compression library) to Lean, passed the test suite, and then proved the code correct. No custom prover. No special training.

theorem zlib_decompressSingle_compress (data : ByteArray) (level : UInt8)

(hsize : data.size < 1024 * 1024 * 1024) :

ZlibDecode.decompressSingle (ZlibEncode.compress data level) = .ok data

Round-trip correctness for compress / decompressSingle, machine-checked.

Radix Experiment

10 AI agents built a verified embedded DSL with proved optimizations over one weekend.

-

Zero

sorry— 52 theorems, all complete -

5 verified compiler optimizations

-

Determinism, type safety, memory safety — all proved

-

Interpreter correctness — sound and complete

-

27 modules, ~7,400 lines of Lean

-

I wrote zero lines. Full agent autonomy.

AI on Lean

To date, every AI achieving medal-level IMO performance with formal proofs used Lean.

-

AlphaProof (Google DeepMind) — silver medal, IMO 2024

-

Aristotle (Harmonic) — gold medal, IMO 2025

-

SEED Prover (ByteDance) — silver medal, IMO 2025

Three independent teams. Every one used Lean.

Recent AI Results

-

Axiom: 12/12 on Putnam 2025.

-

DeepSeek Prover-V2: 88.9% on MiniF2F-test. Open-source, 671B parameters.

-

Harmonic: Built the Aristotle AI — gold medal, IMO 2025

None of these systems existed in early 2024.

Automation in the AI Era

-

grindcloses in one step what takes fifty. The AI search tree shrinks. -

Compact proofs = better training data = better AI provers.

-

I built Z3's heuristics by hand. AI does that better now. The algorithmic core still matters more, not less: congruence closure, linear arithmetic, decision procedures.

-

By default: a dumb and fast

grind.

Trust

AI makes proofs cheap.

Trust remains expensive.

Trust — AI Will Exploit Any Unsoundness

"It's really important with these formal proof assistants that there are no backdoors or exploits you can use to somehow get your certified proof without actually proving it, because reinforcement learning is just so good at finding these backdoors." — Terence Tao

Tools with large TCBs are a liability.

Trust in Lean is layered. Each layer shrinks what you have to trust.

-

Everyday: blue check +

#print axioms. -

Replay:

lean4checkerre-runs the kernel on.oleanoutput. -

Gold standard:

comparatorin a sandbox with multiple independent kernels.

TCB = Liability

Other verification tools trust the SMT solver and the VC generator. Large surface.

Lean: trust the kernel. And we have more than one.

-

Standard axioms:

propext,Classical.choice,Quot.sound. -

sorryAxsurfaces incomplete proofs. Custom axioms surface themselves via#print axioms. -

The Lean compiler is not in the TCB for proofs.

Multiple Independent Kernels

arena.lean-lang.org hosts independent kernel implementations.

-

The reference kernel — C++.

-

nanoda— Rust. -

lean4lean— Lean. -

More under way.

Anyone can build a kernel and submit it.

Disagreement between independent kernels exposes bugs that neither would catch alone.

Validate Proofs Independently

lean4checker replays .olean declarations through the kernel in a fresh context. Catches bugs in meta-programs that bypass the kernel or corrupt memory.

Comparator builds the proof in a sandbox, exports it, and replays through nanoda or the reference kernel.

Coming soon: Comparator at live.lean-lang.org.

Additional TCB for Signal Shot (beyond the Lean kernel and standard axioms)

-

Our specifications

-

Charon & Aeneas

-

Rust Compiler

Verification does not eliminate trust. It relocates it to a surface we can audit.

Commitment

Lean will scale. The missing proof automation and features will be built:

-

Custom proof automation: new

grind/symtactics. -

grindattribute sets optimized for VC generators. -

Infrastructure for matching on goals.

-

Scalability. We will keep optimizing Lean and its kernel.

Conclusion

The Lean platform scales and is ready.

SymM and the new simplifier turn a superlinear bottleneck into a linear one.

-

Mathematics on Lean: Six Fields Medalists. Liquid Tensor Experiment. Fermat's Last Theorem. Sphere packing. Equational Theories Project. Tao's Analysis I. Mathlib: 200,000+ theorems.

-

AI on Lean: Every AI achieving medal-level IMO performance with formal proofs used Lean (AlphaProof, Aristotle, SEED Prover). Axiom: 12/12 on Putnam 2025. DeepSeek Prover-V2: 88.9% on MiniF2F. zlib verified by a general AI.

-

Trust: Multiple independent kernels. Comparator. Small TCB.

-

Adoption: 242,000+ unique installs. 8,000+ GitHub repositories.

-

Lean FRO: 20 engineers building Lean full-time.

Thank You

lean-lang.org

leanprover.zulipchat.com

X: @leanprover

LinkedIn: Lean FRO

Mastodon: @leanprover@functional.cafe

#leanlang #leanprover

leodemoura.github.io